How to Build a Production-Ready RAG Assistant in 2026: A Step-by-Step Tutorial

What is a production-ready RAG assistant in 2026?

A production-ready RAG assistant is an AI system that retrieves relevant, up-to-date documents at query time, then uses them as grounded context for generation, with citations, safety controls, and monitoring. In practice, it combines retrieval quality, prompt discipline, and governance so answers stay accurate, explainable, and maintainable as knowledge changes.

The term RAG comes from the original Retrieval-Augmented Generation paper (Lewis et al., NeurIPS 2020), which combines parametric model knowledge with non-parametric memory. The core advantage is operational: you can update your knowledge base without re-training the model for every content change.

At Optijara, we treat RAG as a system design problem, not a single prompt trick. A good implementation requires clear document pipelines, strong embeddings, robust chunking, retrieval evaluation, answer-style constraints, and security controls that reduce preventable failure modes.

Why should teams use RAG instead of model-only answers?

Teams should use RAG when accuracy, freshness, and source traceability matter, because model-only responses can be fluent yet outdated or unverifiable. RAG improves practical reliability by grounding outputs in controlled documents and enabling citations, auditability, and faster updates, which are essential in legal, enterprise, healthcare, and technical support workflows.

The 2020 RAG paper reports that retrieval-augmented setups achieved state-of-the-art results on three open-domain QA tasks and produced more factual outputs than a strong parametric-only baseline. That does not eliminate errors, but it validates the architectural direction for knowledge-intensive tasks.

Developer behavior also supports this shift. In Stack Overflow’s 2024 survey, 62% of respondents reported currently using AI tools and 76% were using or planning to use them in development workflows. As AI usage grows, grounding and verification become operational requirements, not optional add-ons.

How do you design a reliable RAG architecture step by step?

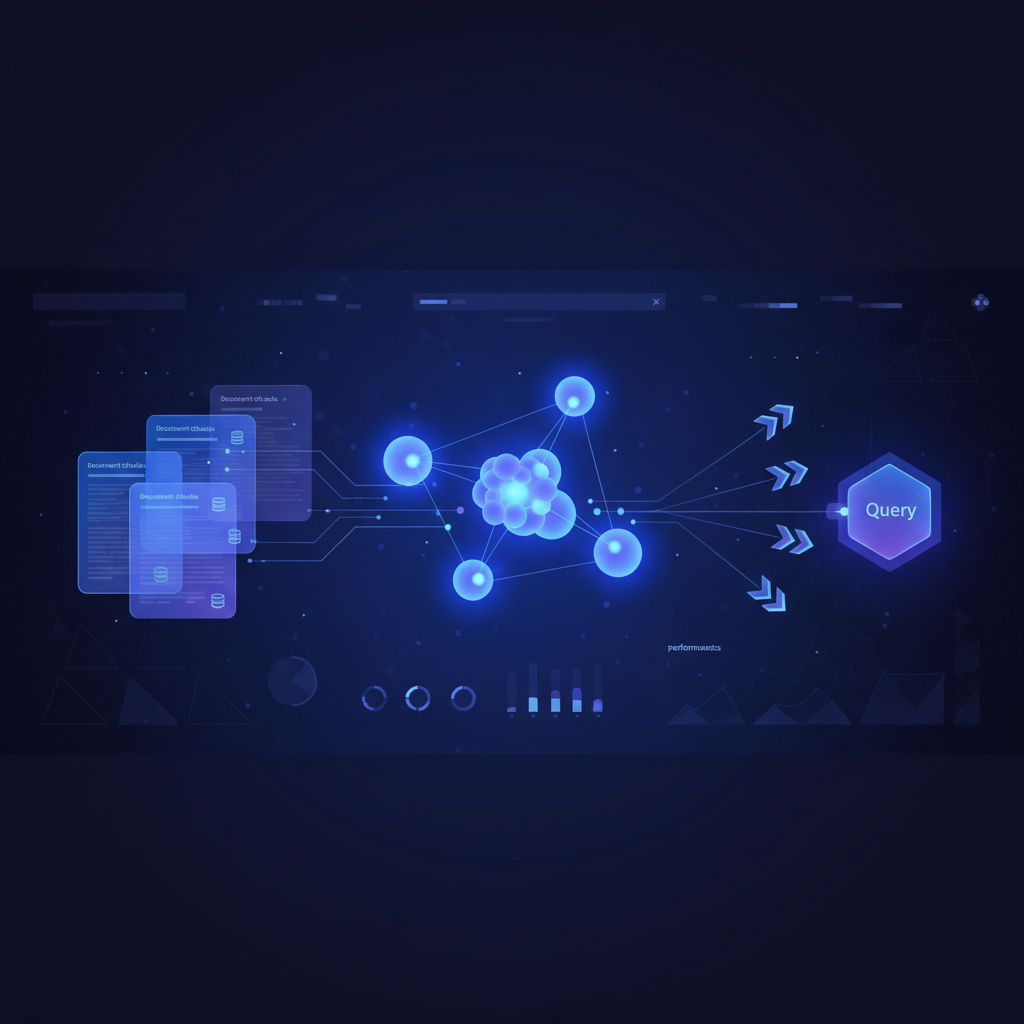

Design reliable RAG by separating concerns into five layers: ingestion, indexing, retrieval, generation, and evaluation. Each layer should have explicit contracts, metrics, and rollback paths. This modular approach prevents hidden coupling, makes incidents easier to debug, and lets teams improve components independently without destabilizing the whole assistant.

- Ingestion: Collect trusted source documents, normalize formats, remove duplicates, and track version metadata.

- Indexing: Chunk documents, generate embeddings, and store vectors with source IDs and timestamps.

- Retrieval: Use hybrid retrieval (semantic + keyword) and optional reranking for precision.

- Generation: Constrain prompts to answer only from retrieved context and cite sources.

- Evaluation: Measure retrieval quality and answer quality separately before release.

This architecture aligns with consensus guidance from NIST’s AI Risk Management Framework: trustworthiness must be designed into development, deployment, and evaluation workflows, not patched in after launch.
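
One way to make the "explicit contracts" between layers concrete is to type the data that crosses each boundary. The sketch below is an assumption-laden illustration, not a prescribed design: `Chunk`, `Retriever`, `KeywordRetriever`, and `answer_sources` are hypothetical names, and the toy keyword retriever stands in for a real retrieval layer.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Protocol


@dataclass(frozen=True)
class Chunk:
    """Unit exchanged between the indexing, retrieval, and generation layers."""
    chunk_id: str
    source_id: str        # which document the text came from
    text: str
    updated_at: datetime  # version metadata tracked at ingestion


class Retriever(Protocol):
    """Contract for the retrieval layer: query in, ranked chunks out."""
    def retrieve(self, query: str, top_k: int) -> list[Chunk]: ...


class KeywordRetriever:
    """Toy retriever that ranks chunks by the number of shared query words."""
    def __init__(self, chunks: list[Chunk]) -> None:
        self.chunks = chunks

    def retrieve(self, query: str, top_k: int) -> list[Chunk]:
        terms = set(query.lower().split())
        scored = [(len(terms & set(c.text.lower().split())), c) for c in self.chunks]
        ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
        return [c for _, c in ranked[:top_k]]


def answer_sources(retriever: Retriever, query: str) -> list[str]:
    """Depends only on the contract, so retrievers can be swapped or rolled back."""
    return [c.source_id for c in retriever.retrieve(query, top_k=3)]


now = datetime(2026, 1, 1, tzinfo=timezone.utc)
index = [
    Chunk("c1", "doc-a", "refund policy for annual plans", now),
    Chunk("c2", "doc-b", "vpn setup guide", now),
]
print(answer_sources(KeywordRetriever(index), "refund policy"))
```

Because `answer_sources` only relies on the `Retriever` protocol, a hybrid or reranking retriever could replace the toy one without touching downstream code.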

How should you prepare documents and chunks for best retrieval quality?

Prepare documents by preserving semantic boundaries, keeping chunks compact, and attaching rich metadata. Good chunking improves recall without flooding the model context window. A practical default is heading-aware chunking with overlap, then iterative tuning based on failed queries, not static “one-size-fits-all” token counts.

Use these document rules:

- Chunk by section headers first, then by paragraph size.

- Keep chunk length consistent (for example, 300–700 tokens) with 10–20% overlap.

- Store metadata: title, URL, language, product area, version, and update date.

- Avoid stuffing unrelated topics into one chunk; it harms retrieval precision.

- Filter boilerplate (navigation, cookie text, legal footers) before embedding.
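
The rules above can be sketched as a small heading-aware chunker. This is a minimal illustration under stated assumptions: documents use Markdown-style `#` headings, whitespace-separated words stand in for model tokens, and `split_sections` and `chunk_document` are hypothetical helper names.

```python
import re
from dataclasses import dataclass


@dataclass
class Chunk:
    heading: str
    text: str


def split_sections(doc: str) -> list[tuple[str, str]]:
    """Split a document into (heading, body) pairs on Markdown-style '#' headings."""
    sections: list[tuple[str, str]] = []
    heading, body = "", []
    for line in doc.splitlines():
        match = re.match(r"^#{1,6}\s+(.*)", line)
        if match:
            if body:
                sections.append((heading, " ".join(body)))
            heading, body = match.group(1).strip(), []
        elif line.strip():
            body.append(line.strip())
    if body:
        sections.append((heading, " ".join(body)))
    return sections


def chunk_document(doc: str, max_tokens: int = 500, overlap: float = 0.15) -> list[Chunk]:
    """Chunk by heading first, then by size, with fractional overlap between windows."""
    step = max(1, int(max_tokens * (1 - overlap)))
    chunks: list[Chunk] = []
    for heading, body in split_sections(doc):
        words = body.split()  # whitespace words stand in for model tokens
        for start in range(0, len(words), step):
            window = words[start:start + max_tokens]
            chunks.append(Chunk(heading, " ".join(window)))
            if start + max_tokens >= len(words):
                break  # the last window already reached the end of the section
    return chunks
```

Keeping the heading on every chunk gives the retriever and the citation template a stable label, and the `max_tokens` and `overlap` defaults are starting points to tune against failed queries.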

MTEB (Massive Text Embedding Benchmark) is widely used to compare embedding quality across retrieval and related tasks. Use benchmark results as a starting point, but always validate on your own domain queries before selecting an embedding model.

What retrieval strategy works best for enterprise assistants?

For most enterprise use cases, hybrid retrieval with reranking offers the best production trade-off: semantic search improves recall, keyword/BM25 search improves exact-match precision, and reranking improves final relevance. This combination reduces brittle failures on acronym-heavy queries, policy IDs, and version-specific terminology common in internal knowledge bases.

A practical retrieval pipeline looks like this:

1) Semantic retrieval (top_k=20)

2) Keyword retrieval (top_k=20)

3) Merge + deduplicate

4) Rerank to top_k=6

5) Pass top contexts to generator with citation template

Start with recall-oriented retrieval, then narrow with reranking. Teams that over-optimize for latency too early often damage relevance. Improve speed after you establish minimum quality thresholds on your evaluation set.
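
The merge-and-deduplicate step can be done in several ways. The sketch below uses reciprocal rank fusion (RRF), which is one common option and not something this tutorial prescribes. The document IDs are made up, and `k=60` is a conventional default rather than a tuned value.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked ID lists; each ID appears once, scored by the sum of 1 / (k + rank)."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)


semantic_hits = ["doc-7", "doc-2", "doc-9"]  # hypothetical IDs from vector search
keyword_hits = ["doc-2", "doc-4", "doc-7"]   # hypothetical IDs from BM25
candidates = reciprocal_rank_fusion([semantic_hits, keyword_hits])
print(candidates)
```

Documents found by both retrievers rise to the top, and the fused list can then be passed to a reranker that trims it to the final context count.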

How do you write prompts that reduce hallucinations in RAG?

Write prompts that explicitly enforce evidence boundaries: answer from provided context, cite sources, and state uncertainty when evidence is missing. The strongest anti-hallucination pattern is not “be accurate” language alone; it is structured output requirements plus refusal behavior when retrieval confidence is low.

Use a system pattern like:

You are an enterprise assistant.

Rules:

1) Use only the retrieved context.

2) If context is insufficient, say: "I don't have enough evidence in the provided sources."

3) Provide citations as [Source: title, section].

4) Separate facts from recommendations.

Then validate outputs with automated checks:

- Missing citation detector

- Claim-to-source overlap check

- Policy phrase blocklist/allowlist

- Response length guardrails for critical workflows
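
The first two checks can be approximated with simple string logic. This is a rough sketch, assuming citations follow the `[Source: title, section]` template and sit before the sentence-ending punctuation. `uncited_sentences` and `overlap_ratio` are hypothetical helpers, and word overlap is only a coarse proxy for real claim verification.

```python
import re

CITATION = re.compile(r"\[Source:\s*[^\]]+\]")


def split_sentences(text: str) -> list[str]:
    """Naive splitter on ., !, or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def uncited_sentences(answer: str) -> list[str]:
    """Missing citation detector: return sentences that carry no citation tag."""
    return [s for s in split_sentences(answer) if not CITATION.search(s)]


def overlap_ratio(claim: str, context: str) -> float:
    """Share of the claim's words (citation stripped) that also occur in the context."""
    claim_words = set(re.findall(r"[a-z0-9]+", CITATION.sub("", claim).lower()))
    context_words = set(re.findall(r"[a-z0-9]+", context.lower()))
    return len(claim_words & context_words) / len(claim_words) if claim_words else 0.0


answer = (
    "Refunds take 5 business days [Source: Billing FAQ, Refunds]. "
    "Contact support for exceptions."
)
print(uncited_sentences(answer))
```

A low overlap ratio does not prove a claim is wrong, but it is a cheap signal for routing an answer to stricter checks or human review.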

How do you evaluate RAG quality before going live?

Evaluate RAG with two scorecards: retrieval quality and answer quality. Retrieval metrics show whether the right evidence was found; answer metrics show whether the model used that evidence correctly. Separating these layers avoids misdiagnosis and helps teams fix the right component faster.

| Layer | Metric | Why it matters |
|---|---|---|
| Retrieval | Recall@k | Checks if relevant documents appear in top-k results. |
| Retrieval | nDCG@k | Rewards ranking quality, not just presence. |
| Generation | Faithfulness | Measures whether claims are supported by retrieved context. |
| Generation | Citation accuracy | Confirms references point to the right source spans. |
| UX | Task success rate | Captures whether users actually solve their problem. |

Build a gold test set from real user questions, including adversarial prompts and ambiguous queries. Re-run evaluations after any model, embedding, or chunking change, and block deployment if faithfulness drops below your agreed threshold.
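
For the retrieval scorecard, both metrics are short enough to compute by hand. The sketch below assumes binary relevance labels (a document is either relevant or not) and a deduplicated result list. The IDs in the example are placeholders for entries in your gold set.

```python
import math


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top-k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def ndcg_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Binary-relevance nDCG: DCG of the ranking divided by DCG of an ideal ranking."""
    dcg = sum(
        1.0 / math.log2(i + 2)
        for i, doc_id in enumerate(retrieved[:k])
        if doc_id in relevant
    )
    ideal = sum(1.0 / math.log2(i + 2) for i in range(min(k, len(relevant))))
    return dcg / ideal if ideal else 0.0


retrieved = ["a", "x", "b", "y"]  # hypothetical ranked result IDs
relevant = {"a", "b", "c"}        # hypothetical gold labels for one query
print(recall_at_k(retrieved, relevant, k=3), ndcg_at_k(retrieved, relevant, k=3))
```

Averaging these per-query scores over the gold set gives the numbers to compare before and after any model, embedding, or chunking change.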

What security controls are mandatory for a production RAG assistant?

Mandatory controls include prompt-injection defenses, output validation, least-privilege tool access, and sensitive-data protections. OWASP’s Top 10 for LLM applications highlights recurring risks such as prompt injection, insecure output handling, and excessive agency. Treat these as baseline engineering requirements, especially when assistants can trigger actions.

- Prompt injection mitigation: Separate user content from system instructions and tool schemas.

- Insecure output handling: Sanitize model outputs before rendering or execution.

- Sensitive data controls: Redact PII/secrets at ingestion and response stages.

- Access governance: Enforce role-based retrieval filters per user identity.

- Action safeguards: Add human confirmation for destructive operations.
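
Two of these controls, sensitive-data redaction and role-based retrieval filtering, can be sketched in a few lines. Treat this as an illustration only: the `sk-`/`key-` secret pattern is a hypothetical format, the email regex is deliberately simple, and `Doc`, `redact`, and `filter_by_role` are made-up names. Production systems usually rely on dedicated PII and secret scanners.

```python
import re
from dataclasses import dataclass, field

EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
API_KEY = re.compile(r"\b(?:sk|key)-[A-Za-z0-9]{16,}\b")  # hypothetical key format


@dataclass
class Doc:
    text: str
    allowed_roles: set[str] = field(default_factory=set)


def redact(text: str) -> str:
    """Mask emails and key-like strings at ingestion and again before responding."""
    text = EMAIL.sub("[REDACTED_EMAIL]", text)
    return API_KEY.sub("[REDACTED_KEY]", text)


def filter_by_role(docs: list[Doc], user_roles: set[str]) -> list[Doc]:
    """Drop documents the caller may not see before they reach the prompt."""
    return [d for d in docs if d.allowed_roles & user_roles]


docs = [Doc("hr policy", {"hr"}), Doc("public faq", {"hr", "support"})]
visible = filter_by_role(docs, {"support"})
print([d.text for d in visible])
```

Applying the role filter at retrieval time, rather than after generation, keeps restricted text out of the model context entirely.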

NIST’s AI RMF and the Generative AI Profile provide a practical governance lens: map failure modes, define controls, measure residual risk, and iterate. Security is not a one-time audit; it is continuous operations.

How much does a RAG assistant cost to run, and how do you control spend?

RAG cost is driven by token usage, retrieval infrastructure, and latency targets. You can control spend by shrinking unnecessary context, using caching, and matching model size to task complexity. Start with quality baselines, then optimize cost per successful task rather than cost per request in isolation.

Cost components typically include:

- Embedding generation for indexing and document updates

- Vector database storage and queries

- Reranking model inference (if enabled)

- Generation model input/output tokens

Example pricing references should always be verified against official vendor pages before publication. For instance, OpenAI’s pricing page documents token-based rates and tool call pricing structures, and these values can change. Use scheduled checks and avoid hard-coding assumptions into public content.
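
To make "cost per successful task" concrete, the arithmetic can be sketched as follows. The rates here are placeholders chosen for easy math, not real vendor prices, and `Rates`, `request_cost`, and `cost_per_successful_task` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Rates:
    """Placeholder USD prices per 1M tokens; NOT real vendor pricing."""
    input_per_million: float
    output_per_million: float


def request_cost(rates: Rates, input_tokens: int, output_tokens: int) -> float:
    """Generation cost of one request from its input and output token counts."""
    total = input_tokens * rates.input_per_million + output_tokens * rates.output_per_million
    return total / 1_000_000


def cost_per_successful_task(total_cost: float, successful_tasks: int) -> float:
    """Spend divided by tasks users actually completed, not by raw request count."""
    if successful_tasks == 0:
        return float("inf")
    return total_cost / successful_tasks


rates = Rates(input_per_million=1.0, output_per_million=4.0)  # placeholder values
per_request = request_cost(rates, input_tokens=3000, output_tokens=500)
print(per_request, cost_per_successful_task(per_request * 1000, successful_tasks=800))
```

With these placeholder numbers, trimming retrieved context lowers `per_request`, but the metric only improves if the success count does not fall with it.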

What does a minimal implementation look like in code?

A minimal implementation needs just four primitives: embed documents, retrieve candidates, build a constrained prompt, and generate with citations. Keep the first version intentionally simple, then add reranking, caching, and policy checks once you can measure baseline errors with real user queries.

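# Pseudocode for a minimal RAG loop.
# embed, vector_db, bm25, rerank_and_select, compose_prompt, llm, and
# post_validate are placeholders for your own components.
def answer_query(query):
    q_vec = embed(query)
    semantic_hits = vector_db.search(q_vec, top_k=10)
    keyword_hits = bm25.search(query, top_k=10)
    # Merge both candidate lists, then rerank down to the final contexts
    contexts = rerank_and_select(semantic_hits + keyword_hits, top_k=6)
    prompt = compose_prompt(
        query=query,
        contexts=contexts,
        rules=[
            "Use only provided context",
            "Cite every factual claim",
            "If evidence is missing, say so",
        ],
    )
    answer = llm.generate(prompt)
    # Run automated checks (citations, policy) before returning
    return post_validate(answer)

answer_query(user_input())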

In production, wrap this with observability (latency, retrieval hit rates, citation coverage), structured logs for incident review, and alerting when faithfulness or task success degrades.
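
A thin wrapper is often enough to start collecting these signals. The sketch below assumes the pipeline is a callable that returns a dict with `answer`, `contexts`, and `citations` keys. That shape, and the `observed_answer` name, are illustrative assumptions rather than a fixed interface.

```python
import json
import logging
import time
from typing import Callable

logger = logging.getLogger("rag")


def observed_answer(query: str, pipeline: Callable[[str], dict]) -> dict:
    """Run the pipeline and emit one structured log record per request.

    `pipeline` is a hypothetical callable returning
    {"answer": str, "contexts": list, "citations": list}.
    """
    start = time.perf_counter()
    result = pipeline(query)
    record = {
        "latency_ms": round((time.perf_counter() - start) * 1000, 2),
        "retrieval_hit": bool(result["contexts"]),   # did retrieval return anything?
        "citation_count": len(result["citations"]),  # input to citation coverage
    }
    logger.info(json.dumps(record))  # JSON lines are easy to aggregate and alert on
    return {**result, "metrics": record}
```

Aggregating these records over time gives the latency, hit-rate, and citation-coverage series that alerting thresholds can be set against.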

How should teams roll out RAG safely in 30 days?

Roll out RAG in 30 days by sequencing scope: week one for data and test questions, week two for retrieval quality, week three for answer controls and security gates, and week four for pilot monitoring. This phased approach reduces launch risk and gives stakeholders measurable checkpoints before full deployment.

- Week 1: Define target tasks, curate trusted documents, build evaluation set.

- Week 2: Implement chunking/indexing, tune retrieval, benchmark Recall@k and nDCG.

- Week 3: Add constrained prompts, citation checks, and OWASP-aligned safeguards.

- Week 4: Run pilot with selected users, analyze failures, finalize go/no-go criteria.

This is where Optijara’s approach matters: consistent architecture guidance, governance templates, and iterative optimization practices make AI assistants easier to scale across teams and regions.

FAQ: What is the difference between fine-tuning and RAG?

Fine-tuning updates model behavior through additional training, while RAG keeps the model fixed and injects external evidence at query time. Use fine-tuning for style, format, or policy behavior; use RAG for changing knowledge. Many production systems combine both, but RAG is usually the fastest path to factual freshness.

RAG is operationally cheaper to update when documents change daily. Fine-tuning can still help for consistent output structure or domain tone, but it should not be your primary mechanism for frequently changing facts.

FAQ: Can RAG eliminate hallucinations completely?

No, RAG cannot eliminate hallucinations completely, but it can significantly reduce them when retrieval quality, prompting, and validation are well designed. Failures still occur through irrelevant retrieval, weak ranking, or unsupported model inferences. Treat RAG as a risk-reduction architecture, not a guarantee, and keep human escalation paths for high-stakes decisions.

Your goal is measurable reduction in unsupported claims, with clear monitoring and incident response when quality drops.

FAQ: What is a good starting chunk size for enterprise documents?

A practical starting range is 300–700 tokens with moderate overlap, then tune by query performance. Smaller chunks can improve precision but hurt context completeness, while larger chunks may dilute relevance. Evaluate chunk size against your own dataset and questions rather than copying generic defaults from tutorials.

Heading-aware chunking often outperforms fixed-size splitting because it preserves meaning boundaries that retrieval models and rerankers can exploit.

FAQ: Which metrics should leadership track weekly?

Leadership should track task success rate, citation accuracy, unresolved query rate, tail latency (for example, p95), and cost per successful task. These metrics align business outcomes with technical quality and operational efficiency. Monitoring only token spend or only model accuracy creates blind spots that can hide reliability or trust problems.

Keep one dashboard for executives and one for engineering depth. Shared definitions prevent conflicting interpretations across teams.

Sources

- Lewis et al. (2020), Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks

- NIST AI Risk Management Framework (AI RMF)

- OWASP Top 10 for LLM Applications / GenAI Security Project

- MTEB: Massive Text Embedding Benchmark (GitHub)

- Stack Overflow Developer Survey 2024 — AI section

- OpenAI API Pricing

Written by

Optijara AI