Generative Engine Optimization (GEO): How to Get Cited by ChatGPT and Perplexity in 2026

Discover actionable Generative Engine Optimization (GEO) tactics.

What is Generative Engine Optimization (GEO)?

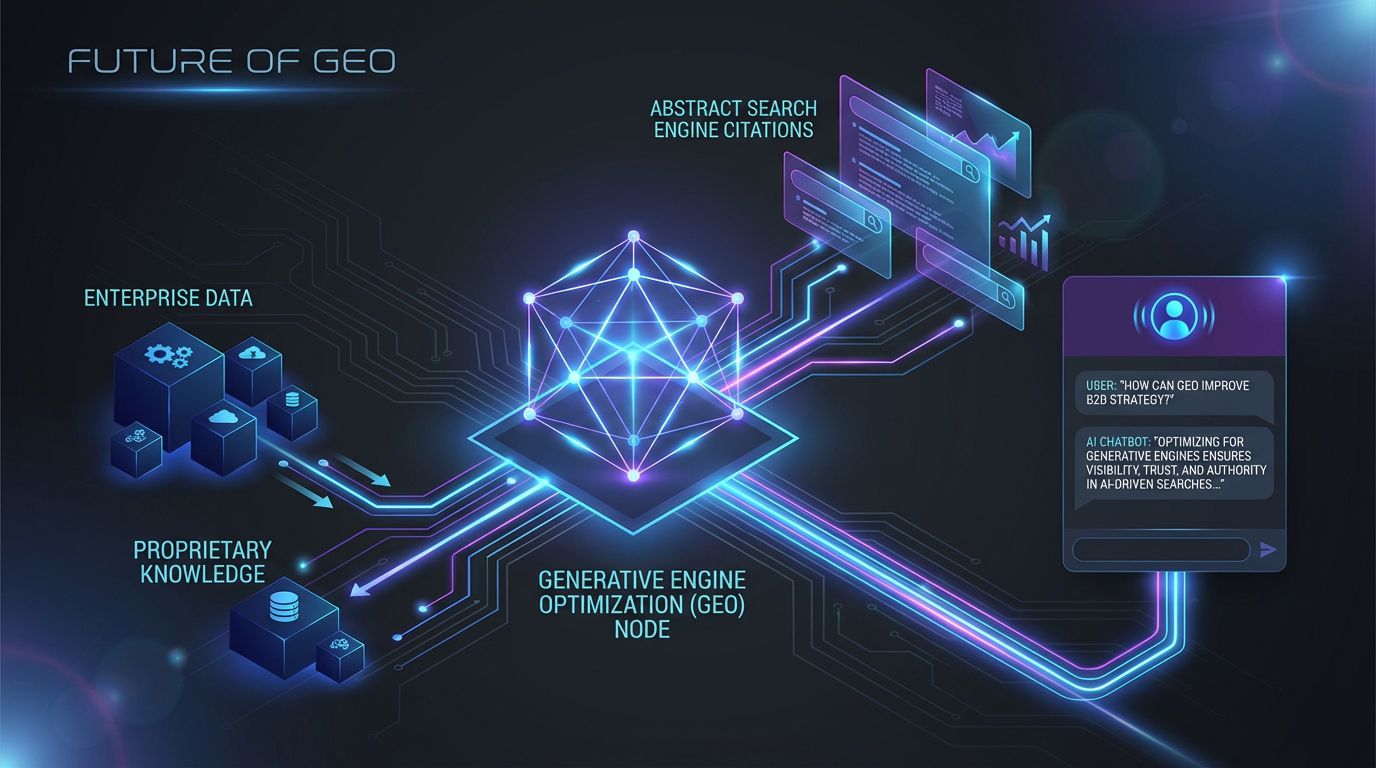

Generative Engine Optimization (GEO) is the biggest shift in digital marketing since the original search algorithm. The digital landscape has moved away from traditional search interfaces. Users no longer type fragmented keywords into a search bar to get ten blue links. They interact with AI systems through conversational queries to get direct, contextual answers. Users demand immediate knowledge, not a list of hyperlinks. GEO is the practice of structuring, writing, and configuring content so AI assistants like OpenAI's ChatGPT and answer engines like Perplexity prioritize your information and cite your brand in their responses.

Modern AI search relies on Retrieval-Augmented Generation (RAG). Large language models used to be limited by static training data. If an event happened after a training cutoff, the model wouldn't know about it. RAG solves this by letting the AI browse the live internet, retrieve relevant documents based on a prompt, and inject that context into its reasoning before generating an answer. GEO is the strategy of shaping your digital footprint so your content is exactly what these models retrieve and favor during the RAG process.

Being cited by an AI engine today is the equivalent of holding the number one ranking on a legacy search engine a decade ago. The mechanics of securing a Perplexity citation are completely different. AI systems don't care about keyword repetitions on a landing page, and they aren't tricked by superficial link-building campaigns. They act as digital researchers, looking for factual accuracy, semantic depth, logical structure, and authoritative consensus. When a user asks an AI to recommend a product or explain a complex condition, the engine scours the internet for the most credible, dense, well-structured information.

A successful GEO strategy blends enterprise data architecture with journalistic content creation. Marketing teams must think like data scientists and write like subject matter experts. The goal is no longer just attracting clicks to a webpage; it is injecting your brand into the systems that mediate human access to information. As AI becomes the default interface for the internet, mastering ChatGPT optimization is a baseline requirement for brand visibility.

Traditional SEO vs. Generative Engine Optimization

Moving from traditional SEO to GEO changes marketing metrics, content strategies, and technical priorities. For over two decades, digital marketers operated under rules dictated by crawler-based search algorithms. These legacy algorithms were essentially sophisticated filing cabinets. They matched queries to documents based on signals like keyword density, meta tags, and inbound links. The main objective was ranking high on a search engine results page (SERP) to capture clicks.

GEO discards many of these assumptions. While traditional SEO focuses on keyword placement for web crawlers, GEO prioritizes semantic depth and factual accuracy for large language models. AI search engines process information semantically. They don't just look for matching words; they try to understand the actual meaning, context, and factual value of the content. Because these systems synthesize answers directly within a chat interface, the traditional click-through rate is no longer the ultimate measure of success. The focus shifts to brand visibility, citation frequency, and algorithmic trust.

Here is how the two disciplines compare across core marketing functions.

| Core Marketing Function | Traditional SEO Strategy | Generative Engine Optimization (GEO) Strategy |
|---|---|---|
| Primary Business Goal | Securing top positions on the visual search engine results page | Earning explicit citations and brand mentions in AI conversational outputs |
| Key Performance Metric | Organic website traffic, click-through rates, and bounce rates | Share of voice in AI responses, citation frequency, and sentiment alignment |
| Content Creation Focus | Keyword targeting, search intent matching, and optimal word counts | High factual density, unique statistical claims, and comprehensive semantic depth |
| Authority Building | Acquiring high quantities of inbound backlinks from external domains | Establishing strong entity associations and providing highly verifiable expert claims |
| Technical Requirements | Optimizing Core Web Vitals, page load speed, and XML sitemaps | Implementing precise schema markup, semantic HTML, and machine-readable data structures |
| User Journey Dynamics | Users clicking through multiple different websites to gather information manually | Users receiving a complete, synthesized answer immediately within the chat interface |

The old playbook relies on manipulating signals that proxy for quality, whereas the new playbook requires delivering actual informational value. In the traditional model, a marketer might publish a superficial article targeting a high-volume keyword and prop it up with purchased backlinks. In the generative era, this strategy fails. When an AI search model processes a superficial article during live retrieval, it recognizes the lack of unique information, discards the document, and cites a more comprehensive source instead.

Domain authority has also evolved. Legacy search engines relied on link graphs to determine trust, but AI evaluates trust through factual consistency and entity recognition. If your brand is consistently associated with accurate data, unique insights, and recognized industry entities across multiple sources, the AI model develops a higher confidence score for your content. A relatively new website with dense, structured, and unique information can easily outcompete a massive legacy domain in a generative search result.

How AI Search Engines Decide What to Cite

Unlike legacy search engines that rely on pre-computed indexes and static ranking factors, AI search engines execute a dynamic, multi-step process in real time every time a user submits a query. This filters out noise, evaluates credibility, and synthesizes the most accurate response. To be the chosen source, you must optimize for each stage of this retrieval pipeline.

First, the AI handles query expansion and intent interpretation. When a user asks a complex question, the model doesn't just search for the exact words in the prompt. It uses its neural network to understand semantic intent. It breaks the query down into core concepts, identifies related entities, and often rewrites the prompt into distinct search queries to run simultaneously across its web browsing tools. This makes exact-match keywords ineffective. The AI wants comprehensive answers covering the entire conceptual neighborhood of the prompt.

After retrieving potential source documents, the system filters and scores them for credibility. AI models select sources with high factual density and discard pages that lack information gain, a measure of how much new, unique, or specific data a document contains compared to the rest of the dataset. Content that regurgitates common knowledge gets a low score and is discarded. Content with original statistics, expert quotes, or specific technical details gets a high score and moves to the final synthesis stage.
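
To make the idea of information gain concrete, here is a toy sketch in Python. It is not how any particular AI engine scores documents; the `novelty_score` function, the token-overlap approach, and the sample sentences (including the made-up survey figures) are all assumptions chosen for illustration. It simply measures what share of a candidate's distinct tokens appear nowhere else in a small corpus.

```python
import re


def tokens(text: str) -> set[str]:
    """Return the distinct lowercase word and number tokens in a text."""
    return set(re.findall(r"[a-z0-9%]+", text.lower()))


def novelty_score(candidate: str, corpus: list[str]) -> float:
    """Fraction of the candidate's distinct tokens found in no corpus document.

    A crude stand-in for "information gain": 0.0 means nothing new,
    1.0 means every token is new relative to the corpus.
    """
    candidate_tokens = tokens(candidate)
    if not candidate_tokens:
        return 0.0
    seen: set[str] = set()
    for doc in corpus:
        seen |= tokens(doc)
    return len(candidate_tokens - seen) / len(candidate_tokens)


# Hypothetical documents already retrieved for a query.
corpus = [
    "Email marketing is a popular channel for small businesses.",
    "Small businesses often use email marketing to reach customers.",
]

# One candidate restates common knowledge; the other adds specifics.
generic = "Email marketing is popular for small businesses."
specific = "Our 2025 survey of 1,200 retailers found a 38% open rate."

print(novelty_score(generic, corpus))
print(novelty_score(specific, corpus))
```

The generic sentence scores zero because every token already appears in the corpus, while the specific one scores close to one. Real systems presumably work on meaning rather than raw tokens, so treat this only as an intuition aid.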

In the generation and citation allocation stage, the AI model loads the highest-scoring documents into its active memory window and begins writing the response. As it generates text, the model cross-references its output with the source documents. If it relies heavily on a specific paragraph or data point from your website, it appends a citation to that sentence. Platforms are incentivized to provide accurate citations to avoid hallucinations and build user trust.

According to major technology research firms like Gartner, citation reliability is the primary metric enterprise users use to evaluate AI tools. Models favor sources that present information in a clear, logically structured, and easily extractable format. If your data is buried in a wall of unstructured text, the model might struggle to extract it confidently. It will choose a competitor's site that presents the same information in a clean, parsable table or bulleted list. To win the citation, your content must provide a high confidence signal through structural clarity and factual density.

5 Proven Tactics to Optimize Content for ChatGPT and Perplexity

The algorithms behind ChatGPT and Perplexity are efficient at identifying high-value information. To ensure your assets are chosen over competitors, your content needs to align with the ingestion preferences of large language models. Here are five tactics to optimize your content for generative engines.

- Maximize Factual Density: This refers to the ratio of hard facts, data points, and concrete entities to the overall word count. AI models aggressively filter out marketing fluff, anecdotal filler, and repetitive transition sentences. Every paragraph should be loaded with specific names, dates, percentages, technical terms, and verifiable claims. Instead of writing that a software product is "very fast and popular," state that it "processes 100,000 transactions per second and is utilized by 45 percent of Fortune 500 financial institutions." A dense concentration of facts increases the likelihood that an AI will extract your sentence.

- Employ Direct Answer Structuring (The Inverted Pyramid): AI retrieval mechanisms operate under strict latency constraints. They have fractions of a second to scan a document, identify relevant information, and determine if it answers the prompt. Adopt an inverted pyramid writing style. When addressing a specific topic, provide the most direct, concise, and definitive answer in the very first sentence. Do not build up to the answer with long introductions. State the core fact immediately, then use subsequent sentences to provide context, supporting data, and nuanced explanations. This lets the AI extract the core answer without parsing complex narrative structures.

- Publish Original Data and Unique Statistical Claims: Large language models suffer from data homogenization. Because they are trained on the same massive corpus of public internet data, they struggle to find truly unique insights. When a live browsing mechanism encounters a fresh, proprietary data set that isn't represented in the model's training data, it heavily prioritizes that source. Conducting original surveys, publishing internal data, or running unique experiments provides the AI with high-value information gain. If your website is the sole originator of a compelling statistic, an AI model discussing that topic will have to cite your domain as the primary source.

- Optimize for Quotation and Expert Attribution: Perplexity and ChatGPT highly value authoritative consensus. They frequently look for direct quotes from recognized subject matter experts to validate their claims. Format your content to include clear, standalone, and specific quotes attributed to notable individuals in your organization. Use standard semantic formatting like blockquotes and ensure the person's full name, job title, and company are stated right next to the quote. The AI will parse this structure and frequently lift the entire quote and brand attribution directly into its final output.

- Map Conversational Contexts Instead of Keywords: Traditional SEO focused on mapping single keywords to single landing pages. GEO requires mapping complex conversational contexts to comprehensive content hubs. Users interact with AI through follow-up questions and extended dialogues. Your content must anticipate these follow-ups. If you write a guide about a new financial regulation, you need to explain what the regulation is, how it impacts small businesses, the compliance deadlines, and what software tools can help manage it. Covering the multidimensional scope of a topic on a single page makes you a one-stop source the AI can rely on for a multi-turn conversation.
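
The factual density tactic above can be approximated with a quick self-audit script. The sketch below is a rough heuristic, not a metric any AI platform is known to use: the `factual_density` function and its two signals (tokens containing digits, and capitalized words that do not open a sentence) are assumptions for illustration. The two sample sentences are the ones quoted in the first tactic.

```python
def factual_density(text: str) -> float:
    """Rough ratio of 'fact-like' words to total words.

    A word counts as fact-like if it contains a digit, or if it is
    capitalized without being the first word of a sentence (a cheap
    proxy for a named entity). Both rules are simplifying assumptions.
    """
    words = text.split()
    if not words:
        return 0.0
    facts = 0
    for i, word in enumerate(words):
        stripped = word.strip(".,;:!?\"'()")
        has_digit = any(ch.isdigit() for ch in stripped)
        starts_sentence = i == 0 or words[i - 1].endswith((".", "!", "?"))
        is_name = stripped[:1].isupper() and not starts_sentence
        if has_digit or is_name:
            facts += 1
    return facts / len(words)


vague = "Our software is very fast and popular."
dense = (
    "It processes 100,000 transactions per second and is utilized by "
    "45 percent of Fortune 500 financial institutions."
)

print(f"vague: {factual_density(vague):.2f}")
print(f"dense: {factual_density(dense):.2f}")
```

Running it on a draft paragraph by paragraph gives a quick way to spot sections that contain no numbers or named entities at all, which are the likeliest candidates for a rewrite.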

Structuring Enterprise Data for AI Consumption

Factually dense writing is the foundation of GEO, but technical presentation is equally critical. Enterprise websites often contain vast amounts of valuable data. If that data is locked behind complex JavaScript rendering, poorly structured HTML, or convoluted site architectures, AI bots will simply ignore it and move on to easily parsable competitor sites. Structuring enterprise data for AI consumption requires a machine-readable environment that allows large language models to ingest your knowledge base with zero ambiguity.

Implement semantic HTML and structured data aggressively. AI crawlers do not look at websites visually. They parse the Document Object Model (DOM) to understand the hierarchy and relationship of the information. Using proper HTML5 tags ensures the bot understands exactly which part of the page contains the main article, navigation, or author information. Enterprise sites must utilize comprehensive schema markup using vocabularies from organizations like Schema.org. Wrapping your content in JSON-LD structured data explicitly tells the AI what entities are on the page. You can define products, reviews, leadership, event dates, and FAQ structures in a machine language that eliminates the need for the AI to guess the context.
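
As a minimal sketch of what JSON-LD structured data looks like in practice, the Python below builds a Schema.org `FAQPage` object and wraps it in the script tag that pages typically embed. The helper names `faq_jsonld` and `script_tag` are hypothetical, and the question and answer are taken from this article's own FAQ; the `FAQPage`, `Question`, `Answer`, `mainEntity`, and `acceptedAnswer` terms come from the Schema.org vocabulary.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize question/answer pairs as a Schema.org FAQPage in JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # Escape "</" so answer text can never close the surrounding script tag.
    return json.dumps(data, indent=2).replace("</", "<\\/")


def script_tag(jsonld: str) -> str:
    """Wrap a JSON-LD string in the script element used to embed it in HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


pairs = [
    (
        "What is the difference between SEO and GEO?",
        "SEO focuses on ranking pages via keywords and backlinks. "
        "GEO focuses on structuring content so AI models cite it as a source.",
    ),
]

print(script_tag(faq_jsonld(pairs)))
```

Generating the markup from the same data that renders the visible page is one way to keep the two from drifting apart; the same pattern extends to other Schema.org types such as `Product` or `Organization`.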

Advanced enterprise optimization requires the development and public exposure of custom Knowledge Graphs. A Knowledge Graph is a structured representation of the real-world entities related to your business and their relationships. Defining these relationships strictly (like stating programmatically that Product X is a solution for Industry Y and is manufactured by Company Z) feeds the AI's internal reasoning engine. When an AI model detects a tightly organized Knowledge Graph, it elevates the trust score of the entire domain. The bot recognizes the information isn't a random collection of web pages, but a verified, organized database of factual assertions.
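
The Product X example can be written down as subject-predicate-object triples, the basic unit of most knowledge graphs. The Python sketch below is illustrative only: the `Triple` type, the `objects` helper, and the predicate names `isSolutionFor` and `manufacturedBy` are hypothetical, not part of any standard vocabulary.

```python
from typing import NamedTuple


class Triple(NamedTuple):
    """One factual assertion: subject --predicate--> obj."""
    subject: str
    predicate: str
    obj: str


# The relationships from the paragraph above, stated explicitly.
GRAPH = [
    Triple("Product X", "isSolutionFor", "Industry Y"),
    Triple("Product X", "manufacturedBy", "Company Z"),
]


def objects(graph: list[Triple], subject: str, predicate: str) -> list[str]:
    """Return every object linked to a subject by the given predicate."""
    return [
        t.obj for t in graph
        if t.subject == subject and t.predicate == predicate
    ]


print(objects(GRAPH, "Product X", "manufacturedBy"))  # ['Company Z']
print(objects(GRAPH, "Product X", "isSolutionFor"))   # ['Industry Y']
```

In a published graph, the plain strings would usually be replaced with stable identifiers (for example, canonical URLs for each entity) so that different pages refer to the same thing unambiguously.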

Enterprises are also bypassing traditional HTML scraping entirely by exposing their data directly to AI platforms through dedicated Application Programming Interfaces (APIs). As platforms like ChatGPT expand their plugin and action ecosystems, they increasingly prefer to pull data directly from structured JSON feeds rather than parsing raw web pages. Offering a clean, read-only API endpoint containing your latest product catalogs, research reports, or public data sets guarantees that AI models have instantaneous access to your most current information. This technical strategy removes the friction of web crawling and ensures your brand is represented accurately whenever a relevant query is processed.

Measuring Success in the Era of AI Search

The shift to GEO changes how marketing departments track analytics and measure return on investment. Traditional metrics like organic sessions, keyword rankings, and bounce rates are losing their relevance. When a user receives a comprehensive answer directly within the interface of ChatGPT or Perplexity, they have no reason to click a link and visit your website. This zero-click reality means tracking website traffic is no longer an accurate proxy for brand visibility. Measuring success requires advanced tools and new key performance indicators tailored for AI search.

The primary metric of success in GEO is Share of Model (SoM) or Share of Conversation. This metric evaluates how frequently your brand, products, or unique data points are cited by AI models when users ask category-level questions. To track this, marketers deploy automated prompt-testing scripts. These scripts query major AI engines with hundreds of variations of industry-relevant prompts and analyze the generated outputs. By parsing these outputs, brands calculate the percentage of times they are mentioned or hyperlinked compared to competitors. If an enterprise software company asks an AI "What are the best cybersecurity tools for hospitals?" and their product is mentioned in 60 percent of the generated responses, they have a strong Share of Model.
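
The counting step of a Share of Model calculation can be sketched in a few lines of Python. This assumes the AI responses have already been collected by whatever prompt-testing script a team uses; the `share_of_model` function, the brand names `AcmeShield` and `NorthGuard`, and the sample responses are all hypothetical.

```python
import re


def share_of_model(responses: list[str], brands: list[str]) -> dict[str, float]:
    """Fraction of responses that mention each brand at least once."""
    if not responses:
        return {brand: 0.0 for brand in brands}
    shares = {}
    for brand in brands:
        # Whole-word, case-insensitive match on the brand name.
        pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
        hits = sum(1 for text in responses if pattern.search(text))
        shares[brand] = hits / len(responses)
    return shares


# Hypothetical outputs for "What are the best cybersecurity tools for hospitals?"
responses = [
    "For hospitals, AcmeShield and NorthGuard are common picks.",
    "Many teams choose AcmeShield for endpoint protection.",
    "NorthGuard is a frequent recommendation.",
    "Consider AcmeShield if you need HIPAA reporting.",
    "Open-source options are also worth evaluating.",
]

shares = share_of_model(responses, ["AcmeShield", "NorthGuard"])
print(shares)  # {'AcmeShield': 0.6, 'NorthGuard': 0.4}
```

Here the first brand appears in three of five responses, matching the 60 percent figure in the example above. Because AI outputs vary from run to run, the result is only as reliable as the number and variety of prompts behind it.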

Tracking referral traffic from AI platforms is still necessary, but it requires sophisticated server-side analytics. Major AI engines often strip out standard referral headers when users click links within their chat interfaces, making the traffic appear as generic direct traffic. To compensate, marketing teams can use log file analysis to identify the user agents associated with AI crawlers and bots, which shows which pages the engines are fetching even when the resulting click carries no referrer. Platforms like Ahrefs and other modern SEO toolsets have developed specialized tracking mechanisms to identify traffic originating from generative interfaces. Isolating this traffic segment lets teams analyze the behavior of users who click through, who often possess a higher intent to convert because the AI has already pre-qualified the recommendation.
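
A minimal log-analysis sketch in Python might look like the following. It assumes access logs in the common "combined" format; the user-agent tokens and referrer hosts listed are assumptions that should be checked against each vendor's current documentation, and the sample log lines (including their simplified user-agent strings) are invented for illustration.

```python
import re
from collections import Counter

# Assumed substrings; verify against each vendor's published crawler docs.
AI_AGENT_TOKENS = ["GPTBot", "ChatGPT-User", "OAI-SearchBot", "PerplexityBot"]
AI_REFERRER_HOSTS = ["chatgpt.com", "perplexity.ai"]

# Combined Log Format tail: "request" status bytes "referer" "user-agent"
LINE = re.compile(
    r'"(?P<request>[^"]*)" \d{3} \S+ "(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)


def classify(line: str) -> str:
    """Label a log line as an AI crawler hit, an AI referral, or other."""
    match = LINE.search(line)
    if not match:
        return "unparsed"
    if any(token in match.group("agent") for token in AI_AGENT_TOKENS):
        return "ai_crawler"
    if any(host in match.group("referer") for host in AI_REFERRER_HOSTS):
        return "ai_referral"
    return "other"


# Invented sample lines using documentation-range IP addresses.
sample = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /guide HTTP/1.1" '
    '200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [10/Jan/2026:10:01:00 +0000] "GET /guide HTTP/1.1" '
    '200 5120 "https://www.perplexity.ai/" "Mozilla/5.0 (Windows NT 10.0)"',
    '203.0.113.7 - - [10/Jan/2026:10:02:00 +0000] "GET /pricing HTTP/1.1" '
    '200 2048 "-" "Mozilla/5.0 (Macintosh)"',
]

counts = Counter(classify(line) for line in sample)
print(counts)
```

Crawler hits show which pages the engines are reading, while referral hits capture only the clicks that still carry a referrer, so the second number will undercount real visits from chat interfaces.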

Measure the impact of GEO through brand sentiment and entity association analysis. Modern marketing requires using secondary AI tools to ingest generated responses about your brand and perform sentiment scoring. It isn't enough to just be cited. You have to ensure the AI cites your brand accurately and in a positive context, reflects your latest product features, and associates your company with high-quality service. By continuously monitoring the semantic web and measuring the qualitative nature of AI citations, brands can iterate on their content strategies, correct factual inaccuracies, and ensure they are visible in conversational interfaces.

Key Takeaways

- Generative Engine Optimization (GEO) focuses on getting cited by AI models rather than ranking on traditional search pages.

- Structuring data clearly with direct Q&A formats increases citation likelihood.

- Moving from SEO to Answer Engine Optimization (AEO) and GEO requires prioritizing factual density and authoritative source linking.

- AI search engines rely on robust retrieval mechanisms that prioritize clear, un-gated, and well-structured enterprise data.

- Measuring success involves tracking brand mentions and referral traffic from AI platforms.

Conclusion

As we move further into 2026, relying solely on traditional SEO is no longer enough. By adopting Generative Engine Optimization (GEO), you ensure your enterprise remains visible, credible, and frequently cited across the most critical AI platforms. Start optimizing your data today, or risk being left out of the AI conversation entirely. Ready to implement a robust GEO strategy? Contact us today to learn how our experts can elevate your brand's AI search presence.

Frequently Asked Questions

What is the difference between SEO and GEO?

SEO focuses on ranking pages on search engines via keywords and backlinks. GEO (Generative Engine Optimization) focuses on structuring content so AI models cite it as a source in their generated answers.

How do I optimize content for Perplexity?

Use clear Q&A formats, provide high factual density, ensure your site is crawlable by their bot, and cite authoritative sources.

Will traditional SEO die because of AI?

No, but it is evolving. Traditional SEO will share the stage with GEO, requiring marketers to optimize for both human searchers and AI retrieval engines.

Sources

- https://www.gartner.com/en/newsroom/press-releases/2024-02-19-gartner-predicts-search-engine-volume-will-drop-25-percent-by-2026-due-to-ai-chatbots

- https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-economic-potential-of-generative-ai

- https://www.perplexity.ai/hub/blog/perplexity-for-enterprise

- https://openai.com/enterprise

- https://searchengineland.com/generative-engine-optimization-geo-what-you-need-to-know-438089

Written by

Hamza Diaz is the founder of Optijara, where he builds practical AI agents, automation systems, and Copilot workflows for service businesses. He writes about AI operations, agent strategy, and real-world implementation for teams that want usable systems instead of hype.