The trillion dollar AI infrastructure buildout: what developers need to know

NVIDIA doubled its AI chip demand forecast to $1 trillion at GTC 2026. Hyperscalers plan up to $720 billion in combined capex this year. Here is what the infrastructure buildout means for developers and founders.

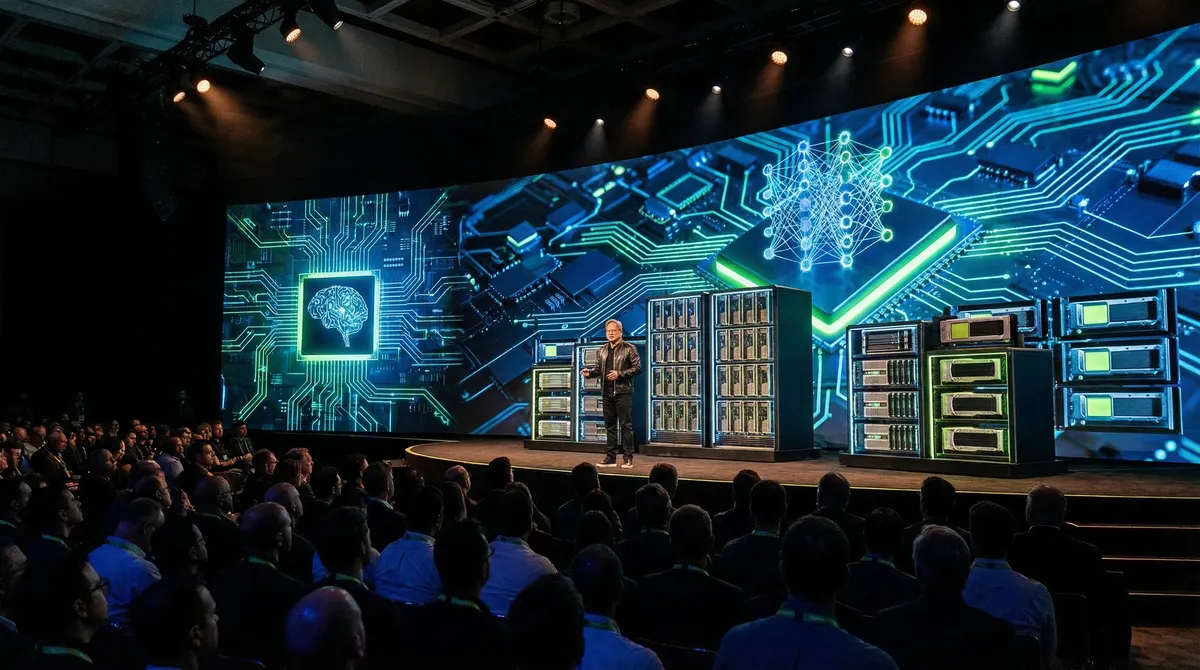

At GTC 2026 on March 17, NVIDIA CEO Jensen Huang doubled his company's demand forecast: "$1 trillion through 2027 at least," he said. "In fact, we are going to be short. I am certain computing demand will be much higher than that." The previous estimate, made during NVIDIA's February earnings call, was $500 billion. In one month, the projected opportunity doubled.

This is not an isolated signal. The four largest hyperscalers (Alphabet, Meta, Microsoft, and Amazon) have collectively guided toward roughly $700 billion in capital expenditure for 2026. Meta signed a $27 billion infrastructure deal with Nebius and plans up to $135 billion in AI-related capex this year. Amazon guided to $200 billion, $50 billion more than analysts expected.

For developers, founders, and technical leaders, these numbers translate into real shifts: where compute is available, what it costs, how applications get built, and which infrastructure decisions matter most in the next 12-18 months.

How big is the AI infrastructure buildout?

Combined hyperscaler AI capex for 2026 approaches $720 billion at the high end of guidance ranges. To put that in context, the figure rivals Sweden's GDP and is more than twice the inflation-adjusted cost of the entire Apollo program. NVIDIA's fiscal year 2026 revenue reached $215.9 billion, up 65% year-over-year, with Q4 data center revenue alone at $62.3 billion.

Global IT spending is projected to exceed $6 trillion for the first time in 2026, according to Gartner. AI workloads are the primary growth driver, with 42% of organizations citing AI workflow optimization as their top spending priority.

Where the money is going

The spending breaks down across several categories that directly affect the developer ecosystem:

GPU and accelerator procurement. NVIDIA's Blackwell and Rubin AI chips remain the primary targets. The $1 trillion forecast reflects demand for inference (running trained models in production) as much as training. Jensen Huang specifically highlighted the shift toward inference workloads as the larger revenue opportunity.

Data center construction. New facilities are being built at unprecedented scale. Meta's $27 billion deal with Nebius covers AI infrastructure deployment across multiple regions. Microsoft, Amazon, and Google are each building campus-scale facilities designed specifically for AI workloads, with custom cooling systems and power delivery architectures.

Power infrastructure. AI data centers consume significantly more electricity than traditional cloud facilities. This has created a parallel infrastructure buildout in energy: new power generation, grid upgrades, and on-site generation facilities. The energy demand is substantial enough that NVIDIA announced space-based computing concepts at GTC 2026, and Elon Musk has discussed orbital computing as a potential solution to terrestrial power constraints.

Networking and interconnect. High-bandwidth connections between GPU clusters and between data centers are a growing cost center. NVIDIA's NVLink and InfiniBand technologies address intra-cluster communication, while hyperscalers invest in dedicated fiber networks between regions.

The inference economy: a structural shift

NVIDIA's $1 trillion forecast is driven in large part by the inference opportunity. Training a large model is a one-time (or periodic) cost. Running that model at scale, which is what inference means, is an ongoing cost that grows with user demand.

This shift matters for developers and founders. The table summarizes how the two eras differ:

| Aspect | Training era | Inference era |
|---|---|---|
| Cost structure | Upfront, batch | Ongoing, per-request |
| Hardware priority | Raw compute | Latency + throughput |
| Scaling concern | Model size | Concurrent users |
| Developer impact | Few teams train models | Every app runs inference |
| Business model | Research-driven | Usage-driven |

The inference economy means that the cost and availability of running AI models in production directly determines which applications are economically viable. As infrastructure scales, inference costs drop, enabling new categories of applications that were previously too expensive to operate.
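To make the "ongoing, per-request" cost structure concrete, here is a minimal sketch of how inference spend scales with traffic. The `Pricing` type, the per-million-token prices, and the token counts are illustrative assumptions, not quotes from any provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical per-million-token prices in USD (assumed, not real quotes)."""
    input_per_million: float
    output_per_million: float


def cost_per_request(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """USD cost of one inference call, billed separately for input and output tokens."""
    total = (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    )
    return total / 1_000_000


def monthly_cost(
    pricing: Pricing,
    requests_per_day: int,
    input_tokens: int,
    output_tokens: int,
    days: int = 30,
) -> float:
    """USD cost of a month of traffic, assuming every request has the same shape."""
    return cost_per_request(pricing, input_tokens, output_tokens) * requests_per_day * days


if __name__ == "__main__":
    assumed = Pricing(input_per_million=1.0, output_per_million=2.0)
    # 1,000 input tokens and 500 output tokens per request, 100,000 requests a day.
    print(f"per request: ${cost_per_request(assumed, 1000, 500):.4f}")
    print(f"per month:   ${monthly_cost(assumed, 100_000, 1000, 500):,.2f}")
```

Under these assumed numbers, halving the per-token price halves the monthly bill, which is why falling inference prices can move an application from unviable to viable without any change to the product.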

What this means for developers

Compute is becoming more accessible, not less. Despite the staggering capex numbers, the buildout is increasing supply faster than demand for many workload tiers. Serverless inference APIs from cloud providers, specialized inference endpoints from model vendors, and edge deployment options are all expanding. Developers building applications on top of foundation models will see improving price-performance ratios through 2026 and 2027.

Multi-cloud and multi-provider strategies are now essential. No single hyperscaler can absorb the full demand. Developers should architect applications to work across providers, using abstraction layers that allow switching between inference endpoints. This is especially important for startups that may not want to commit to a single vendor's pricing trajectory.
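One way to picture such an abstraction layer is a thin wrapper that treats every provider as an interchangeable function and falls back to the next one on failure. This is a minimal sketch: `complete_with_fallback`, `flaky_provider`, and `echo_provider` are hypothetical names, and the stubs stand in for real SDK calls.

```python
from typing import Callable, Sequence, Tuple

# In this sketch a provider is any callable that maps a prompt to generated text.
Provider = Callable[[str], str]


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider raised an error."""


def complete_with_fallback(
    prompt: str, providers: Sequence[Tuple[str, Provider]]
) -> Tuple[str, str]:
    """Try providers in order; return (provider_name, text) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers fallback
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stub providers standing in for real inference endpoints (hypothetical).
def flaky_provider(prompt: str) -> str:
    raise TimeoutError("simulated outage")


def echo_provider(prompt: str) -> str:
    return f"echo: {prompt}"


if __name__ == "__main__":
    name, text = complete_with_fallback(
        "hello", [("primary", flaky_provider), ("secondary", echo_provider)]
    )
    print(name, text)
```

Because application code only depends on the `Provider` signature, swapping vendors or reordering them by price becomes a configuration change rather than a rewrite.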

Edge inference is accelerating. Not all workloads need data center GPUs. Apple Silicon, Qualcomm's AI accelerators, and NVIDIA's Jetson platform are enabling meaningful inference at the edge. For latency-sensitive applications (real-time translation, autonomous systems, on-device assistants), the infrastructure buildout includes local compute, not just cloud scale.

The model-to-infrastructure ratio is shifting. As infrastructure scales, the bottleneck shifts from "can we run this model?" to "can we run it efficiently?" Techniques like quantization, distillation, speculative decoding, and mixture-of-experts architectures become core developer skills rather than research topics.
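As a taste of what these techniques involve, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It is a toy illustration of the idea (store small integers plus one scale factor), not how any particular inference library implements it; the function names are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Avoid dividing by zero when all weights are zero (or the list is empty).
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the quantized integers."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.8, -1.0, 0.3, 0.0]
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print(q, f"scale={scale:.6f}", f"worst error={worst:.6f}")
```

Each weight now fits in one byte instead of four (for 32-bit floats), at the cost of a rounding error of at most half the scale per value. Production systems typically use finer-grained scales and calibration, which this sketch omits.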

What this means for founders

AI infrastructure costs are now a primary line item. For any startup building on AI, compute costs are likely the largest or second-largest expense category. Understanding how to optimize inference costs — model selection, batching strategies, caching layers, and provider negotiation — is a core competency, not an optimization exercise.
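Of those levers, a caching layer is often the simplest to try first. The sketch below is an exact-match cache placed in front of a model call; `CachedModel` and the `call_model` callable are hypothetical, and the approach assumes that identical prompts can safely share one response.

```python
import hashlib
from typing import Callable, Dict


class CachedModel:
    """Wraps a model call so identical (model, prompt) pairs are only paid for once."""

    def __init__(self, call_model: Callable[[str, str], str]) -> None:
        self._call_model = call_model
        self._cache: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    def complete(self, model: str, prompt: str) -> str:
        # Hash model and prompt together; the NUL byte keeps the two fields distinct.
        key = hashlib.sha256(f"{model}\x00{prompt}".encode("utf-8")).hexdigest()
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        result = self._call_model(model, prompt)
        self._cache[key] = result
        return result


if __name__ == "__main__":
    def fake_model(model: str, prompt: str) -> str:
        # Stand-in for a billable inference call.
        return f"[{model}] answer to: {prompt}"

    cached = CachedModel(fake_model)
    cached.complete("small-model", "What is inference?")
    cached.complete("small-model", "What is inference?")
    print(f"hits={cached.hits} misses={cached.misses}")
```

A real deployment would add eviction and expiry, and would skip caching for prompts where varied or personalized output is expected.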

The window for AI-native startups is open but narrowing. The hyperscaler buildout creates massive capacity that will eventually commoditize many AI capabilities. Startups that build defensible differentiation through proprietary data, domain-specific fine-tuning, or novel application architectures have a window now, while the infrastructure is still being deployed. Competing on raw model capability against companies spending $100+ billion annually is not a viable strategy.

Geographic distribution matters. The infrastructure buildout is not uniform. The United States, parts of Europe, and select Asian markets are receiving the bulk of new capacity. Founders targeting emerging markets should account for higher latency and potentially higher costs for AI workloads in those regions.

Token economics: a preview of how AI reshapes compensation

At GTC 2026, Jensen Huang introduced a concept that signals how deeply AI infrastructure is penetrating organizational operations: paying engineers in AI tokens as a supplement to base salary.

"Every single engineer in our company will need an annual token budget," he said. "They're going to make a few hundred thousand a year as their base pay. I'm going to give them probably half of that on top of it as tokens so that they could be amplified 10 times."

At the described levels, engineers would have access to billions of tokens annually. Huang framed this as a competitive recruiting tool: "It is now one of the recruiting tools in Silicon Valley: how many tokens come along with my job."
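A quick back-of-the-envelope check shows how a budget of that size reaches billions of tokens. The $300,000 base salary and the per-million-token prices below are assumptions chosen for illustration; Huang gave neither an exact salary nor a token price.

```python
def tokens_for_budget(budget_usd: float, price_per_million_usd: float) -> int:
    """Number of tokens a dollar budget buys at a flat per-million-token price."""
    return round(budget_usd / price_per_million_usd * 1_000_000)


if __name__ == "__main__":
    base_pay = 300_000            # assumed reading of "a few hundred thousand"
    token_budget = base_pay / 2   # "probably half of that ... as tokens"

    # Two assumed blended prices per million tokens, for comparison.
    for price in (10.0, 2.0):
        tokens = tokens_for_budget(token_budget, price)
        print(f"${price:.2f} per million tokens -> {tokens:,} tokens per year")
```

At an assumed $10 per million tokens, a $150,000 budget buys 15 billion tokens a year; at $2 per million it buys 75 billion. The result is inversely proportional to the price, so the same dollar budget stretches further as inference costs fall.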

Whether or not token compensation becomes widespread, the underlying logic is significant. Companies are beginning to treat AI compute as a productivity multiplier that has quantifiable value per employee. This has implications for how organizations budget, how they measure developer productivity, and how they structure compensation packages in competitive markets.

Risks and open questions

Is this a bubble? Microsoft CEO Satya Nadella and investor Michael Burry have both flagged AI investment excess as a concern. The counterargument: NVIDIA's revenue growth (65% YoY to $215.9 billion) demonstrates that demand is real and current, not speculative. The risk is not that demand does not exist, but that supply buildout may overshoot in specific market segments.

Energy sustainability. The power consumption of AI data centers is a genuine constraint. The industry is investing in nuclear, solar, and grid upgrades, but the timeline for new power generation does not always match the timeline for new data center deployment.

Concentration risk. NVIDIA controls the majority of the AI accelerator market. AMD has struggled to close the gap despite Meta's $100 billion chip deal discussions. This concentration creates supply-chain risk for the entire ecosystem and keeps pricing power firmly with NVIDIA.

Key takeaways

- NVIDIA doubled its AI chip demand forecast to $1 trillion through 2027, up from $500 billion just one month earlier

- The four largest hyperscalers plan approximately $700-720 billion in combined AI capex for 2026

- The spending is shifting from training to inference, which directly affects application economics

- Developers should architect for multi-provider flexibility and invest in inference optimization skills

- Founders need to treat managing AI compute costs as a core competency, not an afterthought

- Token-based compensation is emerging as a recruiting differentiator in Silicon Valley

Frequently Asked Questions

Why did NVIDIA double its demand forecast so quickly?

The increase from $500 billion to $1 trillion reflects the shift toward inference workloads. Training creates one-time demand, but inference scales with every application user. As more production applications adopt AI features, inference demand is growing faster than earlier forecasts projected.

How does the hyperscaler spending affect startup founders?

It increases available compute capacity, which generally reduces inference costs over time. However, it also means hyperscalers will offer more built-in AI capabilities in their platforms, potentially commoditizing features that startups currently charge for. Founders should build defensible moats through proprietary data or unique application architectures.

Is AI infrastructure spending a bubble?

Current evidence suggests demand is real: NVIDIA posted $215.9 billion in revenue with 65% growth, and hyperscalers report strong return on AI investments. The risk lies in potential over-supply in specific segments or a slowdown in enterprise AI adoption that does not match the infrastructure buildout pace.

What should developers learn to prepare?

Focus on inference optimization: quantization, model distillation, speculative decoding, and efficient batching. Understanding multi-cloud deployment and cost optimization across providers will be increasingly valuable as the infrastructure landscape expands.

Will inference costs continue to drop?

Yes, based on historical trends and the scale of current investment. More supply, better hardware (like NVIDIA's Rubin architecture), and software optimizations (mixture-of-experts, efficient attention mechanisms) should continue driving costs down through 2026-2027.

Sources

- https://www.reuters.com/world/asia-pacific/nvidia-ceo-set-reveal-new-chips-software-ai-megaconference-gtc-2026-03-16/

- https://fortune.com/2026/03/17/jensen-huang-ai-infrastructure-buildout-1-trillion-dollars/

- https://www.cnbc.com/2026/03/16/meta-nebius-ai-infrastructure.html

- https://blogs.nvidia.com/blog/state-of-ai-report-2026/

- https://finance.yahoo.com/news/hyperscalers-spending-nearly-700-billion-115600158.html

- https://www.fool.com/investing/2026/03/17/big-tech-is-spending-720-billion-on-ai-in-2026-and/

- https://www.fool.com/investing/2026/03/14/it-spending-will-exceed-6-trillion-for-the-first-t/

Written by Optijara